For thirty years, quantum computing has occupied a peculiar space in the public imagination — perpetually “ten years away,” perpetually promising to change everything, and perpetually failing to arrive. That comfortable distance is gone. In the span of roughly eighteen months — from late 2024 through early 2026 — a cascade of advances has collapsed the gap between quantum theory and quantum reality with a speed that has surprised even the researchers driving it.

These advances are not incremental. A Caltech team published a design showing that Shor’s algorithm, the theoretical weapon capable of breaking the public-key encryption that protects most of today’s internet, could be run on a machine with as few as 10,000 to 100,000 qubits, orders of magnitude fewer than previous estimates. On the same day, Google announced a tenfold improvement in the efficiency of the algorithm’s attack on elliptic curve cryptography. Google’s Willow chip crossed a long-sought error-correction threshold. And a research team produced what may be the strongest candidate yet for verifiable quantum advantage on a problem with genuine scientific relevance.

“The last couple of years have been very, very exciting,” Scott Aaronson, a computer scientist at the University of Texas at Austin, told Discover Magazine. That may be the year’s most carefully calibrated understatement.

What Quantum Computing Actually Is — And Why It’s Hard

Source: https://quantumai.google/whatisqc

Before measuring how far the field has come, it helps to understand why it was hard to begin with.

A classical computer processes information as bits, each either a 0 or a 1. A quantum computer uses qubits, which can exist in a state called superposition: a weighted combination of 0 and 1 at the same time, which resolves to a single definite value only when measured. Groups of qubits can also become entangled, meaning their measurement outcomes are correlated in ways no classical system can reproduce, regardless of the distance between them. A quantum algorithm does not simply try every answer at once; it uses interference to amplify the amplitudes of correct answers and cancel out wrong ones. For certain classes of problems, this yields dramatic, sometimes exponential, speedups over the best known classical methods.
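
To make superposition and entanglement concrete, here is a minimal pure-Python state-vector sketch. It is illustrative only: the helper names (`hadamard_q0`, `cnot_q0_to_q1`, `probabilities`) are hypothetical, and real quantum SDKs provide their own gate APIs. A Hadamard gate puts the first qubit into an equal superposition, and a CNOT gate then entangles it with the second, producing a Bell state in which both qubits are always measured with the same value.

```python
import math

# A two-qubit state is a list of 4 complex amplitudes for the basis states
# |00>, |01>, |10>, |11>, where the left bit is qubit 0.
State = list[complex]


def hadamard_q0(state: State) -> State:
    """Apply a Hadamard gate to qubit 0, mixing the |0x> and |1x> amplitudes."""
    h = 1 / math.sqrt(2)
    out = [0j] * 4
    for q1 in (0, 1):
        a0, a1 = state[q1], state[2 + q1]  # qubit 0 = 0 and qubit 0 = 1
        out[q1] = h * (a0 + a1)
        out[2 + q1] = h * (a0 - a1)
    return out


def cnot_q0_to_q1(state: State) -> State:
    """CNOT: flip qubit 1 when qubit 0 is 1, i.e. swap the |10> and |11> amplitudes."""
    out = list(state)
    out[2], out[3] = state[3], state[2]
    return out


def probabilities(state: State) -> list[float]:
    """Born rule: the probability of each outcome is |amplitude|^2."""
    return [abs(a) ** 2 for a in state]


start = [1 + 0j, 0j, 0j, 0j]       # both qubits in |0>
superposed = hadamard_q0(start)    # (|00> + |10>) / sqrt(2)
bell = cnot_q0_to_q1(superposed)   # (|00> + |11>) / sqrt(2)
print([round(p, 3) for p in probabilities(bell)])  # [0.5, 0.0, 0.0, 0.5]
```

Measuring the Bell state gives 00 or 11 with equal probability and never 01 or 10: the two outcomes are perfectly correlated. Simulating n qubits this way needs 2^n amplitudes, which is why brute-force classical simulation quickly becomes infeasible as machines grow.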

The catch is that qubits are extraordinarily fragile. Temperature fluctuations, stray electromagnetic fields, even vibrations can cause a qubit to decohere — to lose its quantum state and collapse into classical behavior, producing errors. The more qubits you have and the longer you run a computation, the more errors accumulate, until the result is useless.

This is the fundamental tension that has defined the field since its inception: quantum computers are powerful because they exploit quantum phenomena, and they are unreliable for exactly the same reason.

Researchers have spent decades developing error-correcting codes: strategies for encoding a single reliable “logical qubit” across many physical qubits, so that when some of the physical ones go haywire, the logical one stays intact. The challenge is that error correction itself requires additional qubits performing additional operations, which introduces more opportunities for error. For years, the standard estimate was that you needed thousands of physical qubits just to create one reliable logical qubit, and that breaking common encryption would therefore require millions of physical qubits. That math made a useful quantum computer feel comfortably distant.

This is the era researchers call NISQ (Noisy Intermediate-Scale Quantum), a term coined by Caltech physicist John Preskill in 2018 to describe today’s devices: machines with tens to a few hundred noisy qubits, capable of narrow experiments but not general-purpose algorithms. The advances described in the rest of this article show what it looks like when the NISQ era begins to end.
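
The core trade of error correction, many unreliable parts for one reliable part, can be seen in a classical analogue: the repetition code, which stores one bit as n copies and decodes by majority vote. This is a sketch under stated assumptions, not a quantum code. Qubits cannot be copied (the no-cloning theorem) and also suffer phase errors, so real quantum codes use entangled encodings and parity measurements instead, and their thresholds are far lower (on the order of 1 percent per operation for the surface code). The function name is hypothetical.

```python
from math import comb


def logical_error_rate(p: float, n: int) -> float:
    """Chance that a majority vote over n copies (n odd) gives the wrong bit,
    when each copy is flipped independently with probability p."""
    # The vote fails only if more than half of the copies are flipped.
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(n // 2 + 1, n + 1))


# Below this code's threshold (p < 0.5), adding copies suppresses errors;
# above it, adding copies makes things worse.
for p in (0.01, 0.6):
    rates = [logical_error_rate(p, n) for n in (1, 3, 5, 7)]
    print(f"p={p}:", ", ".join(f"{r:.1e}" for r in rates))
```

The same threshold behavior is what “below-threshold” error correction means for quantum hardware: only when physical errors are rare enough does adding qubits help rather than hurt.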

Breakthrough #1: Error Correction Finally Works


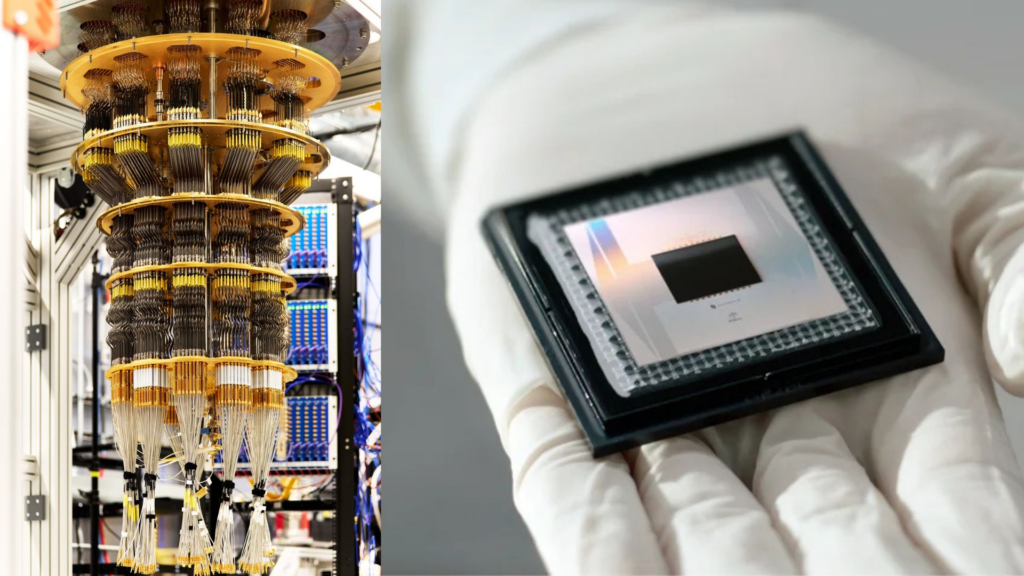

In December 2024, Google’s Quantum AI team unveiled the Willow chip, a 105-qubit superconducting processor. The result it demonstrated was the one the field had been waiting years to see: below-threshold error correction.

The problem with previous error-correcting approaches was circular — adding more physical qubits to improve reliability introduced new error pathways, so systems never actually got more reliable as they scaled. Willow broke that loop. When the team arranged qubits in grids of increasing size — 3×3, 5×5, and 7×7 — using a technique called the surface code, the error rate of the logical qubit fell rather than rose. More qubits meant more accuracy, not more noise.
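
The grid sizes above are surface codes of distance d = 3, 5, and 7 (the side length of the data-qubit grid). In the standard rotated layout, a distance-d patch uses d² data qubits plus d² − 1 measurement qubits, so a distance-7 patch needs 97 qubits and fits on a 105-qubit chip. The sketch below tabulates that count and projects logical error rates under the below-threshold rule that each increase of d by 2 divides the error by a constant suppression factor. The starting error of 3×10⁻³ and the factor of 2 are illustrative assumptions, not Google’s published figures, and the helper names are hypothetical.

```python
def rotated_surface_code_qubits(d: int) -> int:
    """Physical qubits in one distance-d rotated surface-code patch:
    d*d data qubits plus d*d - 1 measurement qubits."""
    return d * d + (d * d - 1)


def projected_logical_error(eps_d3: float, lam: float, d: int) -> float:
    """Below threshold, each increase of d by 2 divides the logical error
    rate by a suppression factor lam (assumed constant here)."""
    return eps_d3 / lam ** ((d - 3) // 2)


# eps_d3 = 3e-3 and lam = 2.0 are illustrative assumptions, not measured values.
for d in (3, 5, 7, 9, 11):
    qubits = rotated_surface_code_qubits(d)
    error = projected_logical_error(3e-3, 2.0, d)
    print(f"d={d}: {qubits} physical qubits, logical error ~{error:.1e}")
```

The qubit count grows only quadratically in d while the logical error falls exponentially in d, which is why crossing the threshold matters so much.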

The system crossed a critical milestone: it corrected errors faster than new ones were introduced. “At that point,” Aaronson explained, “you should be able to stabilize a qubit indefinitely.”

Willow also ran a random circuit sampling benchmark in under five minutes; Google estimated that one of today’s fastest classical supercomputers would need about 10 septillion (10²⁵) years for the same task. The task has no commercial application, but it is strong evidence that quantum hardware can, in carefully chosen circumstances, outperform any classical machine on Earth.

The qLDPC Revolution: Fewer Qubits, More Power

The surface code that powered Willow is reliable but resource-intensive: at the error rates large algorithms require, it typically needs hundreds to thousands of physical qubits per logical qubit. A more fundamental rethinking of this overhead is now under way.

Quantum low-density parity-check (qLDPC) codes are a family of alternative error-correcting schemes that can pack far more logical qubits into a given physical array. The tradeoff is complexity: where surface codes link each qubit only to its neighbors, qLDPC codes require connections between qubits that are far apart. For most hardware architectures, that is prohibitively difficult. But for neutral atoms — qubits made of individual atoms suspended in laser beams that can be physically moved around an array — it is a natural fit.

In April 2026, a team at Caltech led by physicists Dolev Bluvstein and Madelyn Cain, working with error-correction theorist Qian Xu, quantum theorist Robert Huang, and senior theorist John Preskill, published a paper that combined qLDPC codes and neutral-atom hardware to striking effect. Huang and his students recruited a large language model trained on mathematical structures and set it to work optimizing a qLDPC code. The result: a code capable of producing one logical qubit from just four physical atoms, while tolerating 20 to 24 simultaneous catastrophic errors. The previous best-performing qLDPC code needed 12 physical qubits per logical qubit and could handle at most 12 errors.

The LLM also discovered an efficient decoder — the algorithm for diagnosing and correcting errors in real time. “When you do it right,” Preskill told Quanta Magazine, “the answer turns out to be surprisingly encouraging.”
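
A decoder’s job can be illustrated with a classical analogue. The sketch below uses the [7,4] Hamming code, not a qLDPC code: each row of the parity-check matrix H is one check over a few bits, evaluating all checks yields a syndrome, and the decoder maps the syndrome to the most likely error. In an LDPC family, the number of bits in each check stays small as the code grows, which is what “low-density” refers to. Real quantum decoders must handle both bit-flip and phase-flip errors, work from repeated noisy syndrome measurements, and keep up in real time; the Caltech decoder itself is not described here. Function names are hypothetical.

```python
# Parity-check matrix of the [7,4] Hamming code: column j (1-based) is the
# binary representation of j.
H = [
    [0, 0, 0, 1, 1, 1, 1],  # checks bits whose position has the 4s bit set
    [0, 1, 1, 0, 0, 1, 1],  # ... the 2s bit set
    [1, 0, 1, 0, 1, 0, 1],  # ... the 1s bit set
]


def syndrome(word: list[int]) -> list[int]:
    """Evaluate every parity check; any nonzero entry flags an error."""
    return [sum(h * b for h, b in zip(row, word)) % 2 for row in H]


def decode(word: list[int]) -> list[int]:
    """Correct up to one bit flip: the syndrome, read as a binary number,
    is the 1-based position of the flipped bit (0 means no error)."""
    s = syndrome(word)
    position = 4 * s[0] + 2 * s[1] + s[2]
    fixed = list(word)
    if position:
        fixed[position - 1] ^= 1
    return fixed


codeword = [1, 1, 1, 0, 0, 0, 0]   # a valid codeword: all checks pass
received = [1, 1, 1, 0, 1, 0, 0]   # bit 5 flipped in transit
print(syndrome(received))            # [1, 0, 1] -> position 5
print(decode(received) == codeword)  # True
```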

Topological Qubits: A Third Approach

Separately, Quantinuum, working with researchers at Harvard and Caltech, reported one of the first credible experimental realizations of a topological qubit, using its trapped-ion H2 system. Unlike conventional qubits, which store information at a specific physical point, topological qubits encode information in global patterns across the entire system, making them inherently resistant to local disturbances. The experiment was small-scale, but it validated years of theoretical work and represents a potential path to hardware that requires even less error-correction overhead.

Breakthrough #2: A Hardware Race Nobody Has Won Yet

One underappreciated point is that the hardware competition remains genuinely open. Three distinct architectures are advancing in parallel, each with real strengths.

Neutral Atoms: Flexible and Scalable

Neutral-atom systems use individual atoms, often rubidium or cesium, suspended in tightly focused laser beams called optical tweezers. They can be rearranged at will, making them uniquely suited to qLDPC codes that require distant qubit connections. They also tend to hold quantum states longer than superconducting qubits.

The scale of neutral-atom systems has grown rapidly. Mikhail Lukin’s lab at Harvard arranged 280 neutral atoms to run sophisticated algorithms in 2023. In 2025, Manuel Endres at Caltech set a new record by trapping and controlling 6,100 neutral atoms simultaneously. While no calculations were performed at that scale, the result suggested that the platform can reach the qubit counts where fault-tolerant computation begins.

Bluvstein and Cain’s Caltech work is built on this foundation. The startup they formed, Oratomic, is building toward a machine designed specifically to run Shor’s algorithm — not as a distant aspiration, but as a defined engineering target.

Superconducting Qubits: Fast but Fixed

Google and IBM have built their quantum programs on superconducting circuits — loops of metal cooled to within fractions of a degree of absolute zero. These systems operate extremely quickly, with gate speeds measured in nanoseconds. Google’s Willow chip represents the state of the art: 105 qubits with the field’s leading error-correction performance.

The limitation is physical rigidity. Superconducting qubits are fixed in place, like transistors on a chip, making the long-distance connections required for qLDPC codes difficult and expensive. Connecting distant qubits requires routing through intermediate qubits, adding overhead and opportunities for error.
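
A rough sketch of that routing overhead, under simplifying assumptions: qubits sit on a square grid with nearest-neighbor coupling, a long-range gate is implemented by SWAPping one qubit along a shortest path until the two are adjacent, each SWAP decomposes into three two-qubit (CNOT) gates, and errors are independent. The function names and the 99.5 percent gate fidelity are hypothetical illustration values; real compilers route more cleverly.

```python
def swaps_needed(a: tuple[int, int], b: tuple[int, int]) -> int:
    """SWAPs needed to make grid qubits a and b adjacent, assuming
    nearest-neighbour coupling and a shortest (Manhattan) path."""
    distance = abs(a[0] - b[0]) + abs(a[1] - b[1])
    return max(distance - 1, 0)


def routed_gate_fidelity(a: tuple[int, int], b: tuple[int, int], gate_fidelity: float) -> float:
    """Rough success probability of one long-range two-qubit gate: three
    two-qubit gates per SWAP plus the target gate itself, with independent
    errors so that fidelities multiply."""
    gate_count = 3 * swaps_needed(a, b) + 1
    return gate_fidelity ** gate_count


# Neighbours versus opposite corners of a 7x7 grid, at an assumed 99.5% fidelity.
for target in [(0, 1), (6, 6)]:
    swaps = swaps_needed((0, 0), target)
    fidelity = routed_gate_fidelity((0, 0), target, 0.995)
    print(target, swaps, f"{fidelity:.3f}")
```

Connecting opposite corners of even a small grid costs 11 SWAPs (34 two-qubit gates) in this model, cutting the gate’s success probability from 99.5 percent to roughly 84 percent. On a fully connected device, the SWAP count is zero.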

Trapped Ions: Slow but Precise

Quantinuum’s trapped-ion systems use electrically charged atoms held in electromagnetic fields. They operate far more slowly than superconducting chips, but with notably higher accuracy. Quantinuum’s H2-1 device offers 56 fully connected qubits, meaning any qubit can interact with any other directly — a significant architectural advantage.

In November 2025, Quantinuum used its trapped-ion hardware to simulate the Fermi-Hubbard model, a foundational problem in condensed matter physics that describes how electrons interact in materials. The result would have been nearly impossible to replicate classically in a reasonable timeframe. The model is widely studied as a route to understanding high-temperature superconductivity, and such simulations could eventually help in designing room-temperature superconductors, a goal researchers call “arguably the greatest challenge in condensed matter physics.” This is what verifiable quantum advantage starts to look like.
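
For reference, the textbook form of the Fermi-Hubbard Hamiltonian is shown below; the lattice, size, and parameters of Quantinuum’s simulation are not specified here. In this standard notation, t is the amplitude for an electron of spin σ to hop between neighboring sites ⟨i,j⟩, U is the energy cost of two electrons sharing a site, c† and c create and annihilate electrons, and n counts them. Because each of N sites can be empty, hold one spin-up or one spin-down electron, or be doubly occupied, the state space grows as 4^N, which is why exact classical simulation quickly becomes intractable.

$$
H = -t \sum_{\langle i,j \rangle, \sigma} \left( c^{\dagger}_{i\sigma} c_{j\sigma} + c^{\dagger}_{j\sigma} c_{i\sigma} \right) + U \sum_{i} n_{i\uparrow} n_{i\downarrow}, \qquad \dim \mathcal{H} = 4^{N}
$$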

“It’s surprising to me that you still have these very, very different architectures with complementary strengths and weaknesses,” Aaronson noted. “We don’t know yet which of them will be the best or the least expensive way to scale up.”

That uncertainty, paradoxically, is good news. Multiple serious approaches advancing simultaneously increases the odds that at least one will succeed.

Breakthrough #3: Shor’s Algorithm Gets Frighteningly Efficient

The most consequential of these breakthroughs may be the ones that should worry cryptographers most.

Peter Shor’s 1994 algorithm showed that a sufficiently powerful quantum computer could factor large numbers, the mathematical foundation of RSA encryption, in polynomial time, vastly faster than the best known classical algorithms. A variant of the same algorithm solves the discrete-logarithm problem that underpins elliptic curve cryptography (ECC), a second widely used scheme. For three decades, “sufficiently powerful” meant a machine so enormous it could be dismissed. That comfortable buffer has now been sharply compressed.
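
Only one step of Shor’s algorithm needs a quantum computer: finding the period (order) r of aˣ mod N. The rest is classical number theory. The sketch below, with hypothetical helper names, replaces the quantum step with a brute-force loop, which takes time exponential in the number of digits of N and is exactly the part a quantum computer speeds up. Once r is known, gcd(a^(r/2) − 1, N) yields a factor whenever r is even and a^(r/2) is not congruent to −1 mod N.

```python
from math import gcd


def find_order(a: int, n: int) -> int:
    """Smallest r > 0 with a**r % n == 1 (requires gcd(a, n) == 1).
    This brute-force loop stands in for quantum period finding."""
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r


def shor_classical_part(n: int, a: int):
    """Turn the order of a modulo n into two factors of n, or return None
    if this choice of a is unlucky (the algorithm then retries with another a)."""
    g = gcd(a, n)
    if g != 1:
        return g, n // g  # lucky guess: a already shares a factor with n
    r = find_order(a, n)
    if r % 2 == 1:
        return None
    half = pow(a, r // 2, n)
    if half == n - 1:
        return None
    p = gcd(half - 1, n)  # nontrivial because half is a square root of 1 other than +-1
    return p, n // p


print(shor_classical_part(15, 7))  # (3, 5)
print(shor_classical_part(21, 2))  # (7, 3)
```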

The Caltech Paper: Targeting 10,000 Qubits

Bluvstein and Cain arrived at Caltech in mid-2025 with a specific question: what is the smallest quantum computer one could realistically envision building that would be powerful enough to hack a Bitcoin wallet? Their answer was not millions of qubits, or even hundreds of thousands.

Using their optimized qLDPC codes and neutral-atom architecture, the team simulated machine designs of various sizes and projected their performance against real encryption standards. An array of 10,000 atoms could break ECC encryption in roughly three years. Scale to 26,000 atoms, and that falls to a matter of days. For RSA, 100,000 atoms could accomplish the task in about three months. The team founded Oratomic to begin building toward these targets.

Google’s 10x Efficiency Gain

On the same day the Caltech paper was published, a team at Google led by Craig Gidney released a separate finding: a new implementation of Shor’s algorithm for breaking elliptic curve cryptography that is at least ten times more efficient than the previous best approach. Their estimates suggest that fewer than 500,000 qubits could threaten most cryptocurrencies in minutes.
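
Breaking ECC means solving the elliptic curve discrete logarithm problem: given a public point Q = k·G, recover the private scalar k. The toy sketch below, on a textbook curve over the integers mod 17 (hypothetical helper names; Python 3.8+ for modular inverses via `pow`), shows the asymmetry that ECC relies on: computing k·G by double-and-add is fast, while recovering k is done here by trying every value, which is hopeless on a real 256-bit curve. Shor’s algorithm for discrete logarithms removes that asymmetry; Google’s optimized construction is not reproduced here.

```python
# Toy curve y^2 = x^3 + 2x + 2 over the integers mod 17, a common textbook
# example: G = (5, 1) generates all 19 points. Bitcoin's secp256k1 curve uses
# a 256-bit prime modulus instead, which is what makes brute force hopeless.
P_MOD, A = 17, 2
G = (5, 1)


def add(p1, p2):
    """Add two curve points; None represents the point at infinity."""
    if p1 is None:
        return p2
    if p2 is None:
        return p1
    (x1, y1), (x2, y2) = p1, p2
    if x1 == x2 and (y1 + y2) % P_MOD == 0:
        return None  # p2 is the negation of p1
    if p1 == p2:
        slope = (3 * x1 * x1 + A) * pow(2 * y1, -1, P_MOD) % P_MOD  # tangent line
    else:
        slope = (y2 - y1) * pow(x2 - x1, -1, P_MOD) % P_MOD  # chord through both
    x3 = (slope * slope - x1 - x2) % P_MOD
    return x3, (slope * (x1 - x3) - y1) % P_MOD


def multiply(k, point):
    """Compute k * point by double-and-add: only O(log k) additions."""
    result = None
    while k:
        if k & 1:
            result = add(result, point)
        point = add(point, point)
        k >>= 1
    return result


def discrete_log(target, point=G):
    """Recover k from target = k * point by trying k = 0, 1, 2, ...
    Assumes target really is a multiple of point."""
    current, k = None, 0
    while current != target:
        current = add(current, point)
        k += 1
    return k


public_key = multiply(7, G)       # private key 7
print(public_key)                 # (0, 6)
print(discrete_log(public_key))   # 7
```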

In a notable first for the field, Google published the result using a zero-knowledge proof: a cryptographic technique that lets one party prove a claim (here, that its program works as described) without revealing how the program works. The decision underscores the sensitivity of the finding: for the first time, quantum researchers are deliberately withholding technical details that could serve as an instruction manual for bad actors.
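
Google’s actual proof system is not described here. As a much simpler illustration of the zero-knowledge idea, the sketch below runs the classic Schnorr identification protocol in a toy group: the prover convinces the verifier that it knows the secret x behind a public value y = gˣ mod p, and to an honest verifier the transcript (t, c, s) reveals nothing about x. The parameters and function name are hypothetical and far too small to be secure.

```python
import secrets
from typing import Optional

# Toy group (hypothetical, insecure): G = 4 generates a subgroup of prime
# order Q = 11 in the integers mod P = 23. Real systems use ~256-bit groups.
P, Q, G = 23, 11, 4


def prove_and_verify(secret_x: int, public_y: int, challenge: Optional[int] = None) -> bool:
    """Run one round of the Schnorr identification protocol."""
    r = secrets.randbelow(Q)         # prover: fresh random nonce
    t = pow(G, r, P)                 # prover -> verifier: commitment
    c = secrets.randbelow(Q) if challenge is None else challenge  # verifier -> prover
    s = (r + c * secret_x) % Q       # prover -> verifier: the nonce r masks secret_x
    # The verifier checks g^s == t * y^c (mod P) using only public values.
    return pow(G, s, P) == (t * pow(public_y, c, P)) % P


x = 7                 # prover's secret
y = pow(G, x, P)      # published value
print(prove_and_verify(x, y))               # True
print(prove_and_verify(5, y, challenge=3))  # False: this prover does not know x
```

A prover without x can pass a round only by guessing the challenge in advance, a 1-in-11 chance in this toy group, which is why real systems use groups with roughly 2²⁵⁶ elements.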

The Combined Picture

The combined implications of these two papers are stark. Scott Aaronson put it plainly: “When you put together the Google thing with the Caltech thing, Bitcoin could be vulnerable to a quantum computer with only about 25,000 or 30,000 qubits. A year ago, the best estimate would have been in the millions.”

Jeff Thompson, a physicist at Princeton and CEO of neutral-atom startup Logiqal, called Google’s efficiency result “hugely significant.” His conclusion was equally direct: “If you were thinking about when you were going to do a post-quantum crypto transition, you should not be waiting any longer. This is the time to do it.”

Quantum Advantage: The Bar Keeps Moving

Any survey of the field must contend with the fact that “quantum supremacy” claims have a checkered history. Google made one in 2019 and was later shown to have overstated the classical difficulty of the task. Since then, various teams have staked similar claims, which have typically been met with skepticism.

What researchers now demand is verifiable quantum advantage on problems that actually matter — tasks where quantum results cannot easily be replicated classically and where the answer has practical scientific or commercial value.

By that standard, Quantinuum’s November 2025 Fermi-Hubbard simulation is the strongest candidate yet. The simulation produced results that would be prohibitively difficult to compute classically in any reasonable timeframe, and they bear directly on one of the hardest open problems in materials science. “We’re actually getting reasonable candidates for verifiable quantum supremacy that we can do on current devices,” Aaronson said. “As they scale up the devices, they’re going to be able to do more and more interesting simulations.”

Real-World Applications: Who Benefits First

Even while fault-tolerant quantum computers remain years away, today’s hardware is already reshaping what is possible in specific research domains.

Drug discovery is emerging as one of the earliest beneficiaries. Pasqal, a neutral-atom company, used its processors to model water molecule behavior inside protein pockets — a detail that is extraordinarily difficult to simulate classically but directly affects how drugs bind to their targets. Microsoft’s Azure Quantum Elements platform ran over a million advanced chemistry calculations using hybrid quantum-AI tools, achieving accuracy that classical methods alone could not reach.

Materials science and energy follow closely. The same physics that makes the Fermi-Hubbard model scientifically interesting makes quantum computers natural tools for exploring superconductors, batteries, and catalysts. A collaboration between Riverlane and MIT explored plasma simulation relevant to fusion energy, a domain where classical supercomputers begin to struggle.

AI and machine learning are acquiring quantum building blocks, though modestly. Quantinuum developed a quantum-based natural language model using circuits to represent sentence structure. Terra Quantum built a hybrid quantum neural network for classifying medical images in a federated learning setup where patient data never leaves the hospital. These are early, but they suggest quantum accelerators could eventually sit inside larger AI pipelines, handling sub-tasks that benefit from quantum speedup.

Finance and logistics are running pilot projects around portfolio optimization, risk modeling, and routing — problems whose classical solutions are well-understood but computationally costly. Most work here is still exploratory, but organizations are building the in-house expertise they will need when the hardware matures.

The Cryptography Crisis Is Not Theoretical Anymore

The encryption implications deserve separate emphasis, because the risk does not wait for the hardware. An adversary does not need a working quantum computer today to start preparing to use one. The “harvest now, decrypt later” strategy is already in play: state-level actors may be collecting encrypted data today with the intention of decrypting it once sufficiently powerful quantum machines exist.
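
A widely used rule of thumb for this timing risk is Mosca’s inequality: if x is how long data must stay confidential, y is how long a migration to quantum-safe cryptography will take, and z is the time until a cryptographically relevant quantum computer exists, then data encrypted today is at risk whenever x + y > z. The sketch below encodes that check; the function names and example numbers are hypothetical, not forecasts.

```python
def at_risk(shelf_life: float, migration_time: float, time_to_quantum: float) -> bool:
    """Mosca's inequality: harvested data is exposed if x + y > z."""
    return shelf_life + migration_time > time_to_quantum


def exposure_years(shelf_life: float, migration_time: float, time_to_quantum: float) -> float:
    """How long the last data encrypted before the migration finishes would
    still need to stay secret after a quantum computer arrives (0 if none)."""
    return max(0.0, shelf_life + migration_time - time_to_quantum)


# Hypothetical example: records that must stay secret for 25 years, a 5-year
# migration, and a quantum computer assumed to arrive in 15 years.
print(at_risk(25, 5, 15), exposure_years(25, 5, 15))
```

The inequality makes the article’s point quantitative: long-lived secrets and slow migrations both shrink the margin, regardless of exactly when the hardware arrives.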

Virtually all digital infrastructure, from banking and healthcare to government communications, cryptocurrency, and personal messaging, relies on RSA or elliptic curve cryptography for key exchange and digital signatures. Both are now in the crosshairs.

The cryptographic response is underway, but not quickly enough. In August 2024, the National Institute of Standards and Technology (NIST) finalized its first post-quantum cryptography (PQC) standards, FIPS 203, 204, and 205: encryption and signature algorithms based on mathematical problems believed to be hard for quantum computers to solve. The U.S. government has mandated a complete transition to these standards by 2035. Google has set an internal deadline of 2029 to stop using RSA and ECC.
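
ML-KEM, the key-encapsulation standard in FIPS 203, rests on a lattice problem called Module Learning With Errors. The sketch below is its much simpler ancestor, Regev’s single-bit Learning With Errors (LWE) encryption, with hypothetical, insecure toy parameters; it is not ML-KEM. The key idea is that b = A·s + e is easy to compute but, because of the small noise e, hard to invert for s, and no efficient quantum algorithm is known for that problem either.

```python
import secrets

# Toy parameters (hypothetical and far too small to be secure): secret length
# N, number of public samples M, modulus Q. Each noise term is in {-1, 0, 1},
# so the accumulated noise is at most M = 20 in size, well below Q // 4 = 64;
# that margin guarantees every decryption is correct.
N, M, Q = 8, 20, 257


def small_noise() -> int:
    return secrets.randbelow(3) - 1  # uniform over {-1, 0, 1}


def keygen():
    s = [secrets.randbelow(Q) for _ in range(N)]
    a = [[secrets.randbelow(Q) for _ in range(N)] for _ in range(M)]
    # b = A*s + e (mod Q). Without the noise e, s could be recovered by
    # Gaussian elimination; with it, recovering s is the LWE problem.
    b = [(sum(x * y for x, y in zip(row, s)) + small_noise()) % Q for row in a]
    return s, (a, b)


def encrypt(public_key, bit: int):
    a, b = public_key
    chosen = [i for i in range(M) if secrets.randbelow(2)]  # random subset of rows
    u = [sum(a[i][j] for i in chosen) % Q for j in range(N)]
    v = (sum(b[i] for i in chosen) + bit * (Q // 2)) % Q
    return u, v


def decrypt(s, ciphertext) -> int:
    u, v = ciphertext
    # v - <u, s> = (sum of chosen noise terms) + bit * (Q // 2)   (mod Q)
    d = (v - sum(x * y for x, y in zip(u, s))) % Q
    return 1 if Q // 4 < d < 3 * Q // 4 else 0  # nearer Q/2 than 0 means 1


secret, public = keygen()
print([decrypt(secret, encrypt(public, bit)) for bit in (0, 1, 1, 0)])  # [0, 1, 1, 0]
```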

Nikolas Breuckmann, a mathematical physicist at the University of Bristol who studies qLDPC codes, framed the urgency simply: “If you care about privacy or you have secrets, then you better start looking for alternatives.”

What the Skeptics Are Watching

Good technology journalism requires acknowledging the distance between a research paper and a working machine. The most credible voices covering the field are doing exactly that.

The Caltech design is ambitious on several fronts simultaneously. It assumes the full error-correction cycle — detecting errors, interpreting them, fixing them, replacing stray atoms, and preparing for the next cycle — can be completed in one millisecond. No group has demonstrated this at scale. Thompson offered the clearest benchmark: “I’d like to see a demonstration on a smaller scale, say 100 or 1,000 qubits. Show me that you can do a million rounds or something.” Days or weeks of sustained, error-corrected computation remain undemonstrated.
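
The arithmetic behind Thompson’s benchmark is worth making explicit. At the one-millisecond cycle the Caltech design assumes, the hypothetical helpers below convert between wall-clock time and error-correction rounds: a million rounds is under 17 minutes of operation, while a computation lasting a day needs tens of millions of consecutive rounds without a failure.

```python
SECONDS_PER_DAY = 24 * 60 * 60
CYCLE_SECONDS = 1e-3  # one-millisecond cycle assumed by the Caltech design


def rounds_in(duration_seconds: float, cycle_seconds: float = CYCLE_SECONDS) -> int:
    """Approximate number of error-correction rounds in a given wall-clock time."""
    return round(duration_seconds / cycle_seconds)


def minutes_for(n_rounds: int, cycle_seconds: float = CYCLE_SECONDS) -> float:
    """Wall-clock minutes needed to run n_rounds back to back."""
    return n_rounds * cycle_seconds / 60


print(f"1,000,000 rounds: {minutes_for(1_000_000):.1f} minutes")
print(f"One day: {rounds_in(SECONDS_PER_DAY):,} rounds")
print(f"One week: {rounds_in(7 * SECONDS_PER_DAY):,} rounds")
```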

Mikhail Lukin, who helped build the neutral-atom platform that underpins the Caltech design, offered cautious support while highlighting the complexity of the details: “They are broadly in line with what we and others have estimated. But in these resource estimates, details matter and it is important to work them out carefully.”

Scaling from a few thousand qubits to tens or hundreds of thousands raises additional engineering challenges in fabrication, cooling, control electronics, and calibration. The talent pool fluent in the intersection of physics, computer science, and precision engineering is small globally. And most organizations will continue to access quantum computing through cloud platforms rather than owning hardware, meaning the pace of commercial deployment depends on a handful of companies clearing engineering milestones that remain genuinely hard.

None of this negates what has been achieved. It contextualizes it.

What to Expect, and When

Turning these results into a forward timeline requires acknowledging real uncertainty, but the broad strokes are clearer than they were even a year ago.

In the near term (2025–2027), expect continued improvements in logical qubit quality and error rates across all three major hardware platforms. Hybrid quantum-classical workflows will enter production use in chemistry and life sciences, where the hardware is already close enough to being useful. Post-quantum cryptographic standards will see wider deployment, especially among financial institutions and governments facing compliance deadlines.

In the medium term (late 2020s), the first demonstrations of fault-tolerant machines running meaningful sustained computations become realistic. Quantum advantage on real scientific and industrial problems — not just carefully chosen benchmarks — will become routine in quantum chemistry and materials simulation. Hardware architectures will likely consolidate, with one or two platforms pulling ahead based on engineering results rather than theoretical projections.

In the longer term (2030s), machines capable of running Shor’s algorithm against real encryption targets become a genuine possibility, contingent on clearing engineering milestones that do not yet have established solutions. Quantum processors begin integrating into cloud infrastructure as specialized accelerators alongside classical hardware.

The honest summary is this: quantum computing’s trajectory has permanently shifted from “decades away” toward “possibly years away,” and the organizations and governments that manage digital security cannot afford to treat the remaining uncertainty as an excuse for inaction.

Conclusion: The Clock Is Running

The researchers at the frontier of the field are, at their core, physicists with a sense of adventure. They are less interested in breaking Bitcoin than in what a fault-tolerant quantum machine could reveal about the quantum structure of matter, about high-temperature superconductivity, and about the nature of space-time itself.

“Pick a cooler life quest than building the world’s first quantum computer with your friends!” Dolev Bluvstein told Quanta Magazine, shortly before his paper went live.

John Preskill, the senior theorist who advised the Caltech team and who coined the term NISQ in 2018 to describe the era the field has occupied ever since, offered a more measured version of the same sentiment: “We just have to build these machines and see if they work.”

That is the essential truth of where the field stands. The theoretical case is compelling. The engineering path, while demanding, has no known insurmountable obstacles. The timeline has compressed dramatically. The cryptographic implications are urgent and immediate.

The era of quantum computing is not here yet. But for the first time in thirty years, it is measurably close — and the world’s digital infrastructure needs to start moving accordingly.

Key Data Points at a Glance

| Milestone | Value |
|---|---|
| Qubit requirement to break RSA (original estimate) | Billions |
| Qubit requirement to break RSA (2023 best estimate) | ~1 million |
| Qubit requirement to break RSA (Caltech, April 2026) | ~100,000 atoms (about 3 months) |
| Qubit requirement to break ECC (Caltech, April 2026) | 10,000 (about 3 years) to 26,000 (days) |
| Google Shor’s algorithm efficiency gain (ECC) | At least 10× over previous best |
| Google Willow chip qubits | 105 |
| Neutral-atom array record (Endres lab, Caltech, 2025) | 6,100 simultaneous atoms |
| NIST PQC standards finalized | 2024 |
| U.S. government PQC transition deadline | 2035 |
| Google internal RSA/ECC retirement | 2029 |

Sources: Quanta Magazine (Charlie Wood, April 3, 2026); Discover Magazine (Cody Cottier, April 11, 2026); DenebrixAI (Qamar Mehtab, February 2026); Google Quantum AI; Caltech/Oratomic arXiv preprint (2026)