
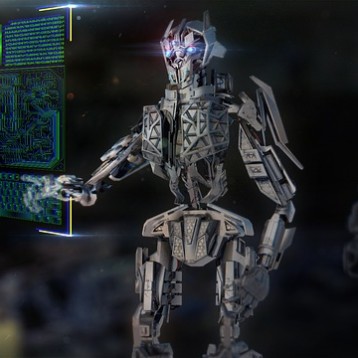

Developed primarily by Atanas Georgiev and Peter K. Allen, with software components designed by several others, the Autonomous Vehicle for Exploration and Navigation in Urban Environments (known as AVENUE) uses a modified Real World Interface (now iRobot) ATRV-2 robot as its base. The researchers added a laser range scanner, a GPS receiver, a tilt sensor, a digital compass, and other navigational and imaging tools. All onboard equipment control and processing is handled by a dual-processor 500 MHz Pentium III machine with 512 MB of RAM running Linux.

Some of the more computationally intensive processing is performed on a series of seven remote servers that communicate with AVENUE over a wireless link. One server, called the NavServer, is dedicated to localization (positioning) and motion control for the robot, and it also provides a high-level interface through which remote hosts can access the robot. GPS is the primary source of position data, but an image matching system is also employed when the GPS signal degrades or is unavailable.


Path planning algorithms designed by Paul Blaer compute paths from maps in which the obstacles to avoid are defined as polygons. Each polygon is converted into a series of discrete points by chopping each side into small line segments, and a Voronoi diagram of the resulting collection of points is computed. Any edge with one or both endpoints inside an obstacle is eliminated from the diagram. The remaining edges are all viable paths on the map. A search algorithm finds the points on the diagram closest to the robot’s starting and desired end positions, then finds a set of connecting edges that forms a viable path from point A to point B.
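The steps described above can be illustrated with a short Python sketch. This is not AVENUE's code: the function names (`discretize`, `inside`, `prune`, `plan`) are hypothetical, the Voronoi construction itself is left to a geometry library and its edges are assumed to arrive as pairs of points, and Dijkstra's algorithm is assumed as the search step because the article does not say which search is used.

```python
import heapq
import math

def discretize(polygon, step):
    """Chop each polygon side into points spaced at most `step` apart."""
    points = []
    n = len(polygon)
    for i in range(n):
        (x0, y0), (x1, y1) = polygon[i], polygon[(i + 1) % n]
        pieces = max(1, math.ceil(math.hypot(x1 - x0, y1 - y0) / step))
        for k in range(pieces):
            t = k / pieces
            points.append((x0 + t * (x1 - x0), y0 + t * (y1 - y0)))
    return points

def inside(point, polygon):
    """Ray-casting test: True if `point` lies inside `polygon`."""
    x, y = point
    hit = False
    n = len(polygon)
    for i in range(n):
        (x0, y0), (x1, y1) = polygon[i], polygon[(i + 1) % n]
        if (y0 > y) != (y1 > y) and x < x0 + (y - y0) * (x1 - x0) / (y1 - y0):
            hit = not hit
    return hit

def prune(edges, obstacles):
    """Drop every edge that has an endpoint inside any obstacle."""
    return [(a, b) for a, b in edges
            if not any(inside(a, o) or inside(b, o) for o in obstacles)]

def plan(edges, start, goal):
    """Snap start and goal to the nearest roadmap vertices, then run Dijkstra."""
    graph = {}
    for a, b in edges:
        graph.setdefault(a, []).append(b)
        graph.setdefault(b, []).append(a)
    if not graph:
        return None
    src = min(graph, key=lambda v: math.dist(v, start))
    dst = min(graph, key=lambda v: math.dist(v, goal))
    best = {src: 0.0}
    prev = {}
    heap = [(0.0, src)]
    while heap:
        d, v = heapq.heappop(heap)
        if v == dst:
            path = [v]
            while v in prev:  # walk back to the source
                v = prev[v]
                path.append(v)
            return path[::-1]
        if d > best[v]:
            continue  # stale heap entry
        for w in graph[v]:
            nd = d + math.dist(v, w)
            if nd < best.get(w, math.inf):
                best[w] = nd
                prev[w] = v
                heapq.heappush(heap, (nd, w))
    return None  # goal vertex is not reachable
```

In use, the output of `discretize` for every obstacle would be passed to a Voronoi routine, the resulting edges filtered with `prune`, and the surviving roadmap handed to `plan` along with the robot's start and goal coordinates.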

Once AVENUE arrives at a target position, it uses its onboard sensors to scan the location. The data and images are forwarded to a modeling system that either begins a new model based on the data or incorporates it into a partial model already under construction. After the new information is applied to the model, the planning system determines the next location at which to gather data and submits it to the path planning algorithm, which calculates the next leg of the route. The process repeats until a full model of the desired site is completed.
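The scan, model, and plan cycle can be summarized as a simple loop. The sketch below is purely illustrative: `explore` and its callable parameters (`scan`, `integrate`, `next_view`, `plan_path`, `drive`) are hypothetical placeholders for the subsystems the article describes, not names from the AVENUE software, and the `max_steps` safety limit is an added assumption.

```python
def explore(scan, integrate, next_view, plan_path, drive, start, max_steps=100):
    """Repeat scan -> update model -> choose next viewpoint -> drive there."""
    model = None          # no model exists before the first scan
    position = start
    for _ in range(max_steps):
        data = scan(position)              # gather range data and images
        model = integrate(model, data)     # start or extend the site model
        target = next_view(model)          # planner picks the next location
        if target is None:                 # planner reports the model is complete
            break
        drive(plan_path(position, target)) # path planner computes the route
        position = target
    return model
```

Passing the subsystems in as functions keeps the loop independent of any particular sensor, modeling, or planning implementation.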

TFOT has previously reported on other robots and assistive devices that rely on path planning and automated movement, including a brain-actuated wheelchair designed to help people with severe motor disabilities, acoustic maps defining obstacles for the blind, a solar-powered lawn mowing robot, and Yamaha’s Terrascout Autonomous Vehicle, which automatically calculates deviations from assigned paths when unexpected obstacles are encountered.

Read more about AVENUE and some of its component elements on the Columbia University project page.