Catchbot has four wheels, a gimbaled camera, and a bumper. This low-slung robot can detect a moving object and independently determine how to move in order to intercept it, basing the decision on a calculation of the object’s relative speed. Because baseball depends heavily on perception, Sugar and McBeath first had to find out exactly how a human outfielder sees and reacts when trying to catch a ball. Catchbot intercepts ground balls with a .750 fielding percentage, suggesting that robots might one day play baseball too.

“As the ball moves, the fielder moves to the spot that keeps the ball angle rising,” McBeath says. “We found that insects, birds, bats, and dogs all appear to behave the same way; it seems to be a generic strategy. No matter where a target is coming from, you try to control the relationship between you and the target. If you can see and keep the target moving at a constant speed and pretty much in a straight direction relative to yourself, then interception is guaranteed.”
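To make the idea concrete, here is a minimal Python sketch of a related textbook strategy, constant-bearing interception, on a flat 2D field. It is a toy model and not Catchbot's actual controller: the function name `simulate_intercept`, the time step, the catch radius, and all the numbers are assumptions made for illustration. The chaser matches the ball's sideways motion so the direction to the ball never changes, then spends its remaining speed closing the gap.

```python
import math

def simulate_intercept(ball_pos, ball_vel, robot_pos, robot_speed,
                       dt=0.01, catch_radius=0.1, max_steps=10_000):
    """Toy constant-bearing chase (hypothetical model, not Catchbot's code).

    Returns (caught, elapsed_time, bearings), where bearings is the
    direction from robot to ball, in radians, recorded at every step.
    """
    bx, by = ball_pos
    rx, ry = robot_pos
    vx, vy = ball_vel
    bearings = []
    for step in range(max_steps):
        dx, dy = bx - rx, by - ry
        dist = math.hypot(dx, dy)
        if dist <= catch_radius:
            return True, step * dt, bearings
        bearings.append(math.atan2(dy, dx))
        ux, uy = dx / dist, dy / dist   # unit vector along the line of sight
        nx, ny = -uy, ux                # unit vector perpendicular to it
        lateral = vx * nx + vy * ny     # ball's sideways speed as seen by the robot
        if abs(lateral) > robot_speed:
            # Robot is too slow to hold the bearing constant.
            return False, step * dt, bearings
        # Match the sideways motion; use the leftover speed to close in.
        closing = math.sqrt(robot_speed ** 2 - lateral ** 2)
        rx += (lateral * nx + closing * ux) * dt
        ry += (lateral * ny + closing * uy) * dt
        bx += vx * dt
        by += vy * dt
    return False, max_steps * dt, bearings

# Assumed example: ball rolls from (0, 10) at velocity (2, -1); robot starts at the origin.
caught, elapsed, bearings = simulate_intercept((0.0, 10.0), (2.0, -1.0), (0.0, 0.0), 3.0)
print(caught, round(elapsed, 2), max(bearings) - min(bearings))
```

In this simplified model, a robot that is faster than the ball always closes the distance, because the ball can only escape along the line of sight, which mirrors the "interception is guaranteed" intuition in the quote. The example uses a time step small enough that the robot cannot jump past the ball between updates.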

A major problem in current research on mobile robot navigation is that the robots have to be operated and directed by humans. This lack of independence might be overcome if the robots used the perceptual information at hand instead of navigating according to previously developed maps.

“The goal of robotics folks is to build the best robots possible. The goal of perception people is to model what humans do,” McBeath explains. “This project helps both fields. In perception, we have all kinds of perception-action models. But it’s difficult to prove if the models really do work as advertised in the real world. We are able to do that kind of testing with Catchbot.”

Catchbot still has room for improvement, as delays were uncovered in its system. The robot’s developers also plan to give it more on-board intelligence and to miniaturize it. “This is a lot bigger than something that just interests two nerdy scientists,” McBeath adds.

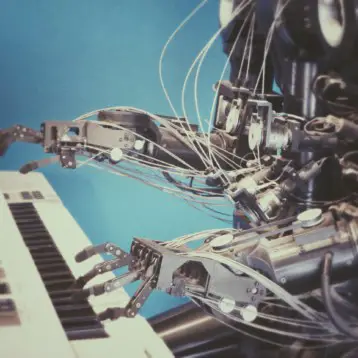

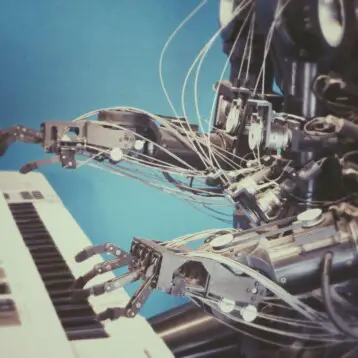

In 2007, TFOT covered that year’s international “RoboCup” competition. The annual “RoboCup” challenge, first held in 1997, has showcased major developments in robotics and its underlying technology over the years. TFOT also covered Toyota’s violin-playing robot, which uses precise control and coordination to achieve human-like dexterity, and a walking robot named Flame, considered one of the most advanced robots of its kind because it achieves great stability while remaining energy-efficient.

More information about Catchbot can be found at ASU’s official website.

Photo: ASU ground-ball-fielding robot, Catchbot (credit: John C. Phillips; Arizona State University Research Magazine).